One Poll Respondent Could Determine Participation in the June Democratic Debates

The 2008 movie Swing Vote tells the story of a man (played by Kevin Costner) whose ballot is inserted into the voting machine on election day but not counted because the machine is accidentally unplugged. It turns out that without this vote, the election in New Mexico is tied and neither presidential candidate has a majority in the Electoral College. So both candidates (Kelsey Grammer and Dennis Hopper) come to New Mexico to court this now-important man, on whose vote the fate of the country rests.

The Democratic National Committee’s (DNC) procedures have put some of the candidates who want to participate in the June debates in the same position as Kelsey Grammer and Dennis Hopper. Depending on what happens with polls that might come out between now and Wednesday, the fate of some of them might hinge on, or might already have been determined by, one or a handful of poll respondents.

On February 14, the DNC published the criteria for being eligible to participate in the first debate. Candidates must meet criterion A, criterion B, or both, by June 12.

A. Demonstrate that the candidate “has received donations from at least (1) 65,000 unique donors; and (2) a minimum of 200 unique donors per state in at least 20 U.S. states.”

or

B. “Register 1% or more support in three polls (which may be national polls, or polls in Iowa, New Hampshire, South Carolina, and/or Nevada) publicly released between January 1, 2019, and 14 days prior to the date of the Organization Debate. Qualifying polls will be limited to those sponsored by one or more of the following organizations/institutions: Associated Press, ABC News, CBS News, CNN, Des Moines Register, Fox News, Las Vegas Review Journal, Monmouth University, NBC News, New York Times, National Public Radio (NPR), Quinnipiac University, Reuters, University of New Hampshire, Wall Street Journal, USA Today, Washington Post, Winthrop University. Any candidate’s three qualifying polls must be conducted by different organizations, or if by the same organization, must be in different geographical areas.”
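As a rough illustration, the two criteria can be written as simple checks. The sketch below is a simplification and not the DNC's actual procedure: the function names and input formats are hypothetical, each poll is assumed to have a single sponsor and a single geographic area, the "different organizations or different geographical areas" rule is read as requiring three distinct (sponsor, area) pairs, and the approved-sponsor list and release-date window are not checked.

```python
def meets_donor_criterion(total_donors, donors_by_state):
    """Criterion A: 65,000 unique donors and 200+ donors in at least 20 states."""
    states_with_200 = sum(1 for count in donors_by_state.values() if count >= 200)
    return total_donors >= 65_000 and states_with_200 >= 20


def meets_polling_criterion(polls):
    """Criterion B (simplified): 1% or more in three polls that differ
    in sponsor or in geographic area.

    polls is a list of (sponsor, area, percent) tuples.
    """
    qualifying = {(sponsor, area) for sponsor, area, pct in polls if pct >= 1}
    return len(qualifying) >= 3


# Hypothetical inputs for illustration only (not real campaign or poll data).
example_polls = [
    ("Monmouth University", "national", 1),
    ("Monmouth University", "Iowa", 2),
    ("CNN", "national", 1),
    ("CNN", "national", 0),
]
example_donors = {f"state_{i}": 250 for i in range(20)}

print(meets_polling_criterion(example_polls))
print(meets_donor_criterion(70_000, example_donors))
```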

If more than 20 candidates qualify under method A or B, then the tie is broken in favor of candidates with the highest polling averages. Specifically, the “‘highest polling average’ is calculated by adding the top three polling results for each candidate (using the top-line number listed in the original public release from the approved sponsoring organization/institution, whether or not it is a rounded or weighted number), and dividing by three. The resulting number will be rounded to the nearest tenth of a percentage point.”
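The tie-breaking statistic quoted above is easy to compute. Here is a minimal sketch; the function name and the example numbers are hypothetical, and Python's built-in rounding is assumed to be an acceptable stand-in for whatever rounding rule the DNC applies.

```python
def top_three_average(poll_results):
    """Average of the three highest poll percentages, rounded to one decimal."""
    if len(poll_results) < 3:
        raise ValueError("need at least three qualifying polls")
    top_three = sorted(poll_results, reverse=True)[:3]
    return round(sum(top_three) / 3, 1)


# Hypothetical top-line percentages for one candidate (not real poll data).
example_polls = [1, 2, 0, 1, 3]
print(top_three_average(example_polls))  # uses 3, 2, and 1; the 0 and the extra 1 are discarded
```

Note that only the three best results enter the average, so low results never count against a candidate.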

I’m not going to comment on whether the DNC’s criteria are the “right” ones or whether they should be restricting candidates at all. Instead, let’s look, statistically, at whether the procedure achieves what appears to be its goal: limiting the debates to candidates who obtain donations from at least 65,000 people or who are supported by at least one percent of the electorate.

I downloaded the spreadsheet of poll results from Zach Montellaro’s June 6 column at politico.com on recent developments and DNC decisions for inclusion in the debates.

Rules of the game

The DNC lists organizations whose polls are eligible. But do all polls from these organizations qualify? Who should be in the denominator of the polling percentage? All Americans? All registered voters? All Democratic registered voters? All Democratic or Democratic-leaning likely voters? Something else? If a poll reports results for different subsets of the population, say one percentage from all respondents and a different percentage from Democratic registered voters, which one should be used? Should both count? What if the majority of a candidate’s support is from registered Republicans? The original guidelines do not say.

Montellaro reported that the DNC decided to exclude two polls from ABC News/The Washington Post even though those organizations were on the approved list in the February guidelines. These polls asked about candidate support using an open-ended question.

There are many ways in which one can ask the question about which candidate is supported. The ABC News/Washington Post polls asked respondents to volunteer the name of the candidate supported (if any) when answering the question: “If the 2020 Democratic primary or caucus in your state were being held today, for whom would you vote?” Respondents could name anyone: some named Michelle Obama or Oprah Winfrey (neither of whom, to my knowledge, is seeking the 2020 nomination). This is called an open-ended question because the set of possible answers is left open for the respondent to decide; the pollster does not provide a list of candidates to choose from. The other polls, by contrast, asked a closed-ended question, in which respondents were read a list of candidates and asked which one they supported. A closed-ended question often has an option for “someone else” or “none of the above,” but most people tend to choose one of the names on the list.

The University of New Hampshire poll in April compared percentages of support obtained from an open-ended question with those from a closed-ended question. About 40% of respondents asked the open-ended question were undecided or could not name a candidate. When presented with a list, however, only 12% said they were undecided, and almost no one said they supported a candidate other than those listed. Most candidates received higher percentages of support with the closed-ended question than the open-ended question (because more people answering the closed-ended question picked a candidate). One would expect, in general, that it would be more difficult to reach a threshold of support with an open-ended question than a closed-ended question.

Influence of one poll respondent

As of June 8, several candidates are “on the cusp” of inclusion in the debates. If new polls come out, a tie-breaking mechanism may be needed.

Of course it is always true that one vote can make a difference in a close election. That’s the entire premise of the movie Swing Vote, and you can no doubt think of several close elections that came down to a handful of votes.

Elections are intended to be a census of voter intention. And election results are not error-free; many recounts give a different tally than the original count. Poll results, however, come from a small sample of voters. One sample will yield different percentages of support than another sample even though both samples are drawn from the same population of voters.

Consider a candidate who has surpassed the 1% threshold in two polls. Now poll number 3 comes along, with a sample of size 300. The candidate is ineligible for the debate if supported by two respondents in this poll but becomes eligible if supported by three. With so many candidates and a small sample size, many candidates may be in the position where their inclusion depends on one or two poll respondents even though they might be well-liked (perhaps a second or third choice) by voters.
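The arithmetic behind this example can be sketched in a few lines. The helper name is hypothetical, and the sketch assumes that the unweighted, unrounded share of respondents is what gets compared with the threshold, which may not match how a particular poll reports its top-line number.

```python
def min_supporters(sample_size, threshold_pct=1):
    """Smallest respondent count whose share is at least threshold_pct percent."""
    return -(-sample_size * threshold_pct // 100)  # integer ceiling division


n = 300
needed = min_supporters(n)
for supporters in (needed - 1, needed):
    print(f"{supporters} of {n} respondents = {100 * supporters / n:.2f}%")
```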

What about the poll quality and sample size?

The POLITICO spreadsheet helpfully included links to the pollsters’ data releases, and I looked up their sample sizes and methodologies. They use a variety of methods. Some are telephone polls (which now call both landline and cell phone numbers); others poll persons who have opted to participate in an online panel. Some of the telephone polls use random-digit-dialing (they generate telephone numbers at random to call); others call persons from a list of registered voters. Some are in English only; others are conducted in English and Spanish. The polls use different methods to weight the data and to determine who is likely to vote.

One feature is, unfortunately, shared by all the polls: a very low response rate. Of the polls I checked, only the ABC News/Washington Post polls — the ones now excluded by the DNC — reported response rates. But we know from other reports that response rates for most polls are in the low single digits, and that most people called for a poll either do not answer the phone or refuse to participate. Pollsters use statistical models to infer results for the nonrespondents from the respondents’ data, but no one knows how well those models work until the election takes place. It’s possible that models that worked well for previous elections might not perform as well in a primary election in 2020 with lots of candidates.

For most of the polls, the number of persons asked about support of Democratic candidates was relatively small, about 300 or so. This results in a margin of error in the range of about six to ten percentage points for a candidate supported by about 50% of the voters (the margin of error is smaller for candidates with less support). The margin of error does not include errors from nonresponse or other inaccuracies in the data. But even without considering these additional sources of error, the difference between a candidate polling 1% and one polling 2% is pretty much noise.
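For readers who want to check the scale of these numbers, here is a minimal sketch using the textbook margin-of-error formula for a simple random sample at 95% confidence. This is an assumption for illustration: published margins are often larger because pollsters adjust for weighting, and the normal approximation is rough for percentages near 1%.

```python
import math


def margin_of_error(p, n, z=1.96):
    """Approximate 95% margin of error for a proportion p from a simple random sample of n."""
    return z * math.sqrt(p * (1 - p) / n)


for p in (0.50, 0.02, 0.01):
    moe_points = 100 * margin_of_error(p, 300)
    print(f"support {100 * p:.0f}%: +/- {moe_points:.1f} percentage points")
```

Under these assumptions the margin for a candidate near 1% is itself about one percentage point, which is the sense in which a 1% versus 2% difference is hard to distinguish from sampling noise.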

The DNC’s tie-breaking mechanism calls for looking at the average of a candidate’s three highest poll results. But even if one wants to use an average, should all polls count equally? Should a poll that interviews 50 registered Democrats count the same toward the average as one that interviews 2,000 registered Democrats? Should a poll conducted in January listing a small subset of the candidates count as much as a poll in May that lists all of them? Should a poll with a response rate of one-half of one percent count as much as one with a response rate of ten percent? Would it be better to use a weighted average that weights by sample size, margin of error, or another measure of poll quality?
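To make the weighting question concrete, here is a minimal sketch comparing a simple average with one weighted by sample size. The poll numbers are invented for illustration, and weighting by sample size is only one of the options raised above, not a recommendation.

```python
def simple_average(polls):
    """Unweighted mean of the poll percentages."""
    return sum(pct for pct, _ in polls) / len(polls)


def sample_size_weighted_average(polls):
    """Mean of the poll percentages, weighting each poll by its sample size."""
    total_n = sum(n for _, n in polls)
    return sum(pct * n for pct, n in polls) / total_n


# Hypothetical (percent support, sample size) pairs, not real poll data.
hypothetical_polls = [(4.0, 50), (1.0, 2000), (1.0, 400)]

print(round(simple_average(hypothetical_polls), 2))
print(round(sample_size_weighted_average(hypothetical_polls), 2))
```

In this invented example the small poll pulls the simple average up to 2%, while the weighted average stays close to 1%.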

Candidates are listed in different numbers of polls

Finally, let’s explore an issue mentioned in the previous paragraph in more depth. The DNC is now including only closed-ended polls. But the lists do not contain the same sets of candidates. A candidate listed in more polls has a higher chance of meeting the poll criterion — that is, achieving at least one percent support in at least three polls — than a candidate listed in fewer polls.

Let’s look at a simple example. Suppose that, if we could determine the intention of every single Democratic primary voter in the U.S. population, we would find that candidate Y is supported by exactly one percent of the electorate.

Whether Y is included in the debate, however, depends on how many polls list Y as one of the candidates. Suppose that each poll takes a simple random sample of 300 voters, and everyone who is asked to participate does so and gives an accurate answer about his or her preference. Different polls, though, will get different numbers of respondents supporting candidate Y just because different people were randomly chosen for the samples. One sample might have three people supporting Y; another might have five; another might have no one. Overall, we expect about 58% of the possible samples to have three or more persons supporting Y (you can work this probability out yourself if you’ve taken Stat 101), and thus count as one of the polls qualifying candidate Y for the debate.
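The 58% figure can be checked with a binomial calculation. This is a minimal sketch under the same idealized assumptions as the example (simple random sample of 300, true support of exactly 1%, full and accurate response); the function name is hypothetical.

```python
from math import comb


def prob_at_least(k, n, p):
    """P(X >= k) when X is Binomial(n, p)."""
    return 1 - sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k))


# Probability that a poll of 300 voters finds three or more supporters of Y.
p_poll = prob_at_least(3, 300, 0.01)
print(round(p_poll, 2))  # 0.58
```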

If Y is listed in only three polls, each one of those polls must have three or more persons supporting Y for Y to qualify for the debate; the probability that this occurs is approximately 0.19, so if Y is listed in only three polls Y has about a 19% chance of qualifying for the debate. If Y is listed in eight polls, however, Y can still qualify for the debate even if some of the polls have fewer than three supporters of Y. The probability that Y will get three or more supporters in at least three out of eight polls is much higher — close to 0.94.
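These two probabilities follow from applying the binomial distribution a second time, now counting qualifying polls instead of respondents. The sketch below assumes the polls are independent simple random samples of 300 voters each; the function names are hypothetical.

```python
from math import comb


def binom_tail(k, n, p):
    """P(X >= k) when X is Binomial(n, p)."""
    return 1 - sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k))


def prob_qualify(num_polls, support, sample_size=300, min_supporters=3):
    """Probability of reaching min_supporters in at least three of num_polls polls."""
    p_single_poll = binom_tail(min_supporters, sample_size, support)
    return binom_tail(3, num_polls, p_single_poll)


print(round(prob_qualify(3, 0.01), 2))  # Y listed in three polls
print(round(prob_qualify(8, 0.01), 2))  # Y listed in eight polls
```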

The middle graph of Figure 1 shows the probability that Y, who in reality is supported by 1% of the population, will meet the criterion of getting at least 1% support in at least three polls, as a function of the number of polls that list Y as a candidate. Of course Y has no chance of meeting the criterion if Y’s name is listed in fewer than three polls, and the chance of being included in the debate increases with the number of polls that list Y.

Figure 1. Probability that candidate meets criterion for being included in the debate, as a function of the number of polls that include the candidate’s name.

But now look at what happens with Candidate X (the graph on the left in Figure 1), who is supported by only one-half of one percent of the population — half as many people as Candidate Y. Candidate X is more likely to meet the polling criterion than Y if Y’s name is mentioned in three polls but X’s name is mentioned in ten polls, even though fewer people in the population support X. Similarly, candidate Z (in the rightmost graph of Figure 1), supported by two percent of the population, may be less likely to be included in the debate than Y if Z is mentioned in three polls but Y is mentioned in seven or more polls.
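The comparisons among X, Y, and Z can be tabulated directly. This sketch reuses the idealized setup of the example (independent simple random samples of 300, no nonresponse); the function names are hypothetical and the table is a recomputation under those assumptions, not the code behind Figure 1.

```python
from math import comb


def binom_tail(k, n, p):
    """P(X >= k) when X is Binomial(n, p)."""
    return 1 - sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k))


def prob_qualify(num_polls, support, sample_size=300, min_supporters=3):
    """Probability of reaching min_supporters in at least three of num_polls polls."""
    p_single_poll = binom_tail(min_supporters, sample_size, support)
    return binom_tail(3, num_polls, p_single_poll)


# True population support assumed for each candidate in the example.
candidates = {"X": 0.005, "Y": 0.01, "Z": 0.02}

print("polls " + "  ".join(f"{name:>5}" for name in candidates))
for num_polls in range(3, 11):
    row = "  ".join(f"{prob_qualify(num_polls, s):5.2f}" for s in candidates.values())
    print(f"{num_polls:5d} {row}")
```

Reading across rows shows the reversals described above: X listed in ten polls is more likely to qualify than Y listed in three, and Y listed in seven polls is more likely to qualify than Z listed in three.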

And what if a tie-breaker is needed? The procedure in event of a tie is to take the average of each candidate’s three highest polls. Let’s look at the expected value of this statistic for candidates X, Y, and Z. The expected value of the average of the three highest polls, like the probability of exceeding the three-polls-with-at-least-one-percent-support threshold, depends on how many polls list the candidate’s name. If candidate Y, who is supported by 1% of the population, is listed in only three polls then we would expect the average percentage support from those three polls to be 1%. But, as the number of polls increases, the expected average support in the three highest polls increases too, because we’re discarding more of the polls where by chance the candidate got low support. If candidate Y is listed in ten polls, the expected value of the average of the three highest polls is about 2%.
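One way to compute this expected value exactly, under the same idealized assumptions (independent simple random samples of 300), uses the fact that the expected value of the k-th largest count equals the sum over x of the probability that at least k polls exceed x. The sketch below is my own illustration of that identity with hypothetical function names; it is not necessarily how Figure 2 was produced.

```python
from math import comb


def binom_pmf(i, n, p):
    """P(X = i) when X is Binomial(n, p)."""
    return comb(n, i) * p**i * (1 - p) ** (n - i)


def expected_top3_average(num_polls, support, sample_size=300):
    """Expected average percentage over the three highest of num_polls polls."""
    pmf = [binom_pmf(i, sample_size, support) for i in range(sample_size + 1)]
    expected_sum = 0.0  # expected total supporters in the three highest polls
    exceed = 1.0  # running value of P(count > x)
    for x in range(sample_size):
        exceed = max(exceed - pmf[x], 0.0)
        for k in (1, 2, 3):
            # P(at least k of the polls have more than x supporters)
            expected_sum += sum(
                binom_pmf(j, num_polls, exceed) for j in range(k, num_polls + 1)
            )
    return 100 * expected_sum / (3 * sample_size)


for num_polls in (3, 5, 10):
    print(num_polls, round(expected_top3_average(num_polls, 0.01), 2))
```

With exactly three polls nothing is discarded, so the result equals the true support of 1%; the value rises as more polls are available to choose from.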

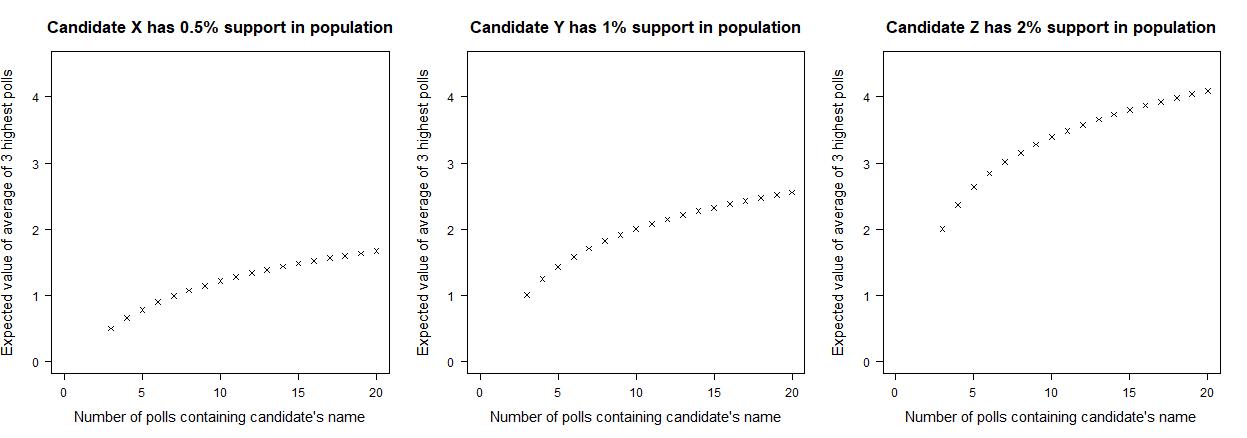

Figure 2 shows the expected average of the three highest polls for candidates X, Y, and Z as a function of the number of polls listing the candidate’s name. Again, candidate X has the lowest support in the population among the three, but, if included in all the polls, is expected to have a higher average from the top three polls than candidate Y (who has more population support) if candidate Y is in only four polls.

Figure 2. Expected value of the average percentage supporting the candidate from the three highest polls, as a function of the number of polls listing the candidate’s name.

The DNC’s procedure thus gives an advantage to candidates whose names are listed in more polls. Even if two candidates are supported by exactly the same number of people in the population, the candidate featured in more polls has a greater chance of exceeding the one-percent-in-at-least-three-polls threshold for inclusion in the debate and, if a tiebreaker is needed, is likely to have a higher score on the statistic used to break ties.

My simple example assumes that Y’s supporters do not switch to another candidate when Y is not listed. In reality, though, a respondent may choose the second- or third-choice candidate if the first choice is not listed, which would give candidates listed in more polls an even greater advantage for meeting the polling thresholds.

Why allow support of just one candidate?

The DNC’s method of determining who participates in the debates has a number of problems from a statistical viewpoint. The procedure given in the February guidelines provides ambiguous guidance as to which polls and estimates are to be included. Moreover, even if it is desired to limit participation to those exceeding a preset threshold of public support, some candidates are advantaged relative to others because they have been included in more polls. Excluding the open-ended polls exacerbates this problem.

A high percentage of Democrats in open-ended polls state they are undecided (35% in the April ABC News/Washington Post poll). Asking persons to choose one candidate among a large field gives a narrow view of voter preference. Many voters may still be deciding among multiple candidates (after all, the first primary elections and caucuses are several months away), and polls that ask whether respondents find individual candidates acceptable or unacceptable, or ask respondents to rank candidates in order of preference, may give a more nuanced picture than those that ask about a unique preferred candidate.

And perhaps candidates who poll low now might see their support increase later in the primary season. The NBC News/Wall Street Journal poll released in June 2015 had candidate Jeb Bush in the lead of a crowded Republican primary field with 22 percent support, followed by Scott Walker with 17 percent. Donald Trump’s support in that poll? About one percent.

Copyright (c) 2019 Sharon L. Lohr